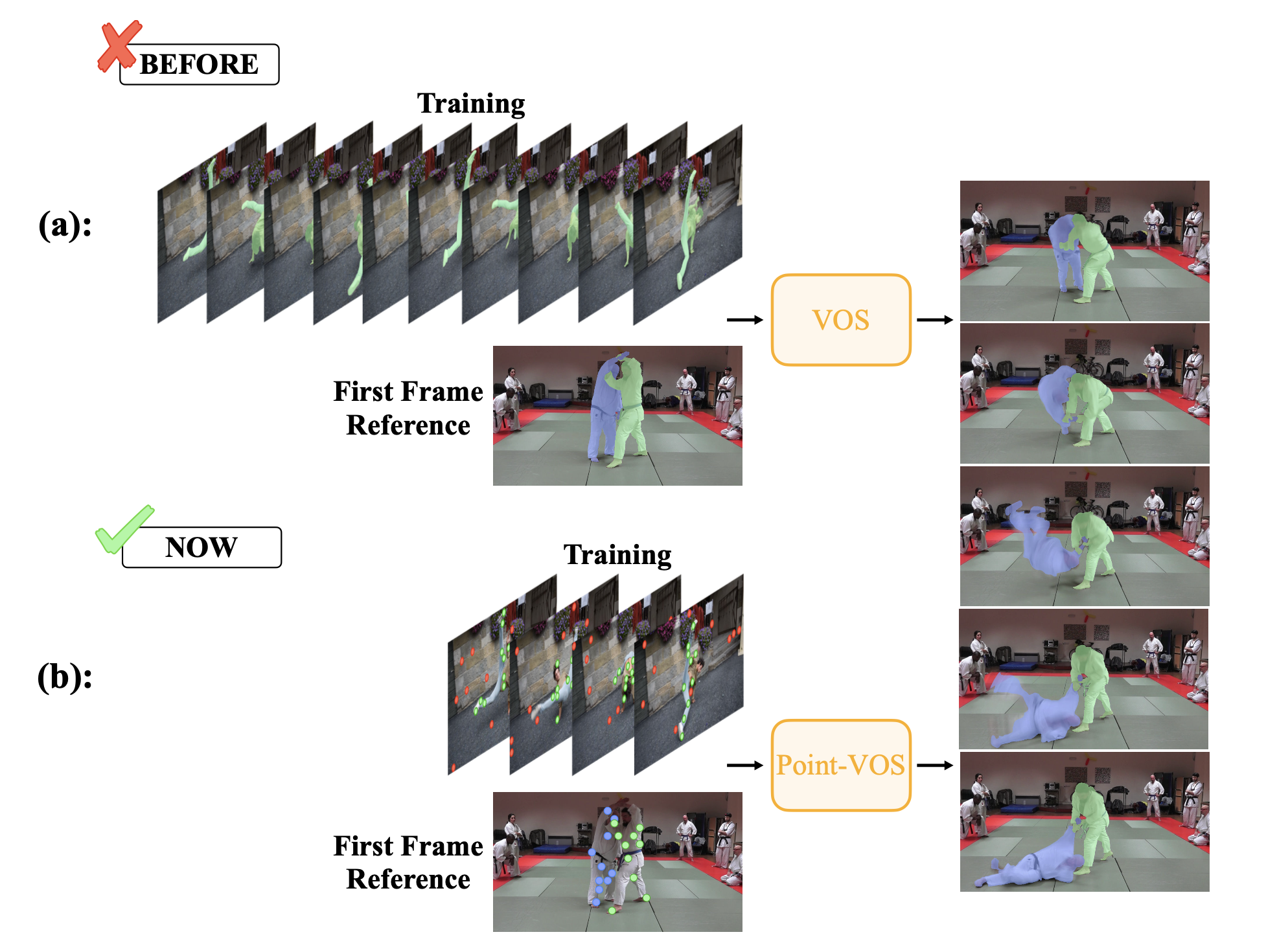

Current state-of-the-art Video Object Segmentation (VOS) methods rely on dense per-object mask annotations both during training and testing. This requires time-consuming and costly video annotation mechanisms. We propose a novel Point-VOS task with a spatio-temporally sparse point-wise annotation scheme that substantially reduces the annotation effort. We apply our annotation scheme to two large-scale video datasets with text descriptions and annotate over 19M points across 133K objects in 32K videos. Based on our annotations, we propose a new Point-VOS benchmark, and a corresponding point-based training mechanism, which we use to establish strong baseline results. We show that existing VOS methods can easily be adapted to leverage our point annotations during training, and can achieve results close to the fully-supervised performance when trained on pseudo-masks generated from these points. In addition, we show that our data can be used to improve models that connect vision and language, by evaluating it on the Video Narrative Grounding (VNG) task.

@inproceedings{zulfikar2024point,
  author    = {Zulfikar, Idil Esen and Mahadevan, Sabarinath and Voigtlaender, Paul and Leibe, Bastian},
  title     = {Point-VOS: Pointing Up Video Object Segmentation},
  booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year      = {2024}
}